100% Inhuman Made Badges

Gary Hall, in collaboration with COPODE

What is familiar and well known as such is not really known for the very reason that it is familiar and well known.

- G. W. F. Hegel, Phenomenology of Spirit

Spouting quotes from Mao, Castro, and Che Guevara, which are as germane to our highly technological, computerized, cybernetic, nuclear-powered, mass media society as a stagecoach on a jet runway at Kennedy airport?

- Saul Alinsky, Rules for Radicals

The Society of Authors (SoA) recently introduced a certification project, intended to assist readers with distinguishing books written by humans in a publishing landscape that is increasingly populated by AI-generated material. Framed as a way to ‘help protect an entire profession’, the scheme – the first of its kind established by a UK trade association – allows SoA writers to register their titles and download a ‘Human Authored’ logo for display on the back covers.

It echoes a comparable initiative unveiled in the United States by the Authors Guild in early 2025, and has attracted support from prominent writers such as Malorie Blackman and Mary Beard. The certification project and its accompanying logo were launched at the London Book Fair in March 2026, where visitors were also given copies of an ‘empty’ book. Conceived as another protest against the use by AI companies of authors’ work – often downloaded from ‘pirate’ libraries – without permission or payment, the volume, titled Don’t Steal This Book, contained nothing but a list of writers’ names, approximately 10,000 in all.

Given all this, we’re aware some of you reading this may find what follows about artificial intelligence eccentric, even provocative. After all, wouldn’t it be easier all-round simply to go along with the emerging critical consensus and maintain:

- that AI is best understood through the lens of a ready-made political framework borrowed from the past: Thatcherism (Dan McQuillan), fascism (McQuillan), techno-fascism (McQuillan yet again), Marx (Nick Dyer-Witheford, Atle Mikkola Kjøsen and James Steinhoff), and specifically that collective form of labour Marx refers to in the ‘Fragment on Machines’ as the ‘general intellect’ (Matteo Pasquinelli)?

- that AI is nihilist (David Golumbia), stupid (James Bridle), parrot-like (Emily Bender and co.), synthetic shit (Kate Crawford)?

Evidence in support of this view includes research from 2025 suggesting that ‘21-33% of YouTube’s feed may consist of AI slop or brainrot videos’ designed primarily to maximise audience attention. Such material has been described as the ‘“spam” of the video-first age’. (AI slop refers to low-quality, inaccurate or inauthentic material that is over-reliant on generative AI tools such as ChatGPT or Claude in its creation; the related scholarly slop refers to AI-hallucinated references in academic books and journals and their spread across other texts; while brain rot denotes content that has the effect of eating away at the viewer’s intellectual faculties.)

- that AI companies such as Meta, Google and OpenAI should indeed stop stealing other people’s art and creativity?

Engineers at Meta reportedly downloaded over 7.5 million books and 81 million research papers from online ‘pirate’ libraries such as Library Genesis to train their Llama 3 LLM AI. Meanwhile, in September 2025 the AI firm Anthropic reached a $1.5 billion settlement with a group of authors and publishers who claimed their books had been used without authorisation to develop its chatbot. In response to such expropriation, numerous creatives, including Paul McCartney, Dua Lipa and Kate Bush, have called for the existing copyright and licensing regulations to be strengthened. They have voiced their concerns about AI through campaigns such as that opposing the UK government’s plan to introduce a text and data-mining exception with regard to copyright. This proposal would have permitted AI firms to use copyrighted material without payment, permission or transparency about the works involved, albeit with an explicit opt-out option for writers, musicians and creative artists. The government had framed the plan as an attempt to strike a balance between the two sectors: on the one hand granting creatives greater control over how their work is used; on the other acknowledging that AI models must be developed and trained on pre-existing material if the artificial intelligence market is to continue to grow in the UK. Such was the backlash it received from the creative sector, however, that in March 2026 the government announced it was no longer in favour of the idea.

Yet when it comes to understanding AI and its implications for politics and art, we’re going to suggest that refusing to go along with the consensus is precisely what’s required – for reasons that have to do with the very nature of art and the political.

Let’s begin with art. In his 2025 book On Giving Up, the psychoanalyst Adam Phillips insists that:

The point of art being difficult is that it resists our easy appropriation of it. We can’t use it to consolidate our prejudices, or reinforce our assumptions and presumptions. Art of any value requires the kind of attention we don’t give to the taken-for-granted …. Art, in this sense, unsettles and disrupts preconceptions … ; art resists and sabotages our familiar habits and perception. … And the implication is that this drive to familiarize is like a drive towards death…

Phillips suggests that to feel alive we need to give up our ‘habitual tactics and techniques’ for deadening ourselves; and that art has traditionally been one of the key means of doing so. Yet art itself, Phillips insists, is now all too familiar to us. It is a familiarity that extends, we would argue, not only to painting, drawing and other forms of handmade or handcrafted art; nor simply to what Walter Benjamin called auratic art. It also includes avant-garde, machine-made and technologically (re)produced art. As such, art now belongs alongside work, clothes and furniture on that list of means of habitualization that was begun by the formalist literary critic Viktor Shklovsky in his 1917 essay, ‘Art as Technique’. Indeed, for Phillips, ‘interruption’ in Shklovsky’s sense is ‘the only cure for familiarization’.

(It’s worth emphasising that this view of art is not confined to Phillips. In 1918 – just a year after Shklovsky – the poet Vladimir Mayakovsky announced that museums, as places where art is displayed, were ‘dead shrines’. And one hundred years before all of them the philosopher G.W.F. Hegel argued that art, at its highest level, belonged to the past, no longer holding the significant role in human culture it once did, having been surpassed in this respect by philosophy and reason.)

So what might such interruption look like today? In particular, how might we pay attention to – and perhaps even ‘defamiliarize’ (Shklovsky) and disrupt – our increasingly habitual, taken-for-granted ways of perceiving AI (and so choose life rather than continuing to deaden ourselves by repeating the usual prejudices and presumptions about it)?

Consider the point above concerning copyright. At first glance Big Content and Big AI appear to be at odds. Yet as the video essayist and writer Alexander Avila shows – in one of the few recent attempts to think differently about the issue to have appeared in the mainstream media – even when this is the case such opposition is often short-lived, with the two sides quickly joining together to the repeated disadvantage of artists, writers and musicians. Major music labels may present themselves as being aligned with creatives in fighting copyright infringement by AI companies, ostensibly to protect the interests of figures such as McCartney, but in reality the situation is more complicated. In 2024, Universal Music Group – alongside Sony Music Entertainment and Warner Records – filed a lawsuit against the AI song generators Suno and Udio over the unlicensed use of its recording catalogue. Soon after, however, it entered into partnership with one of these very companies, Udio.

Since Avila’s argument runs against the grain of what we typically hear from artists and their supporters, it’s worth following at a little more length. He shows how, across the music industry, large media conglomerates are using the backlash against AI to consolidate both their power and their profits. Exclusive licensing deals are struck with Big AI companies, promising new revenue streams for labels, while the songwriters, performers and musicians whose work is used to train the AI models – often without any option to opt out of the datasets – receive little or no compensation. The pattern resembles what happened with the development of streaming platforms, when ‘labels and studios pocketed the profits and left musicians, writers and actors behind’ – only it may now be unfolding on a larger scale still.

Even if AI firms are eventually required to pay for the data they use, there may be no real advantage in it for artists. Avila points out that major businesses such as Microsoft and Anthropic are well positioned to absorb licensing fees whereas smaller open-source AI start-ups are not. Paradoxically, efforts to regulate Big AI through copyright law may end up entrenching the dominance of these firms. Other measures put forward ‘in the name of “protecting artists”’ risk producing similar effects. Legislative initiatives such as the NO FAKES Act in the US, intended to regulate deepfakes, could result in granting studios greater control over performers’ digital likenesses rather than empowering performers themselves. Indeed, licensing regimes might see creators being required to sign over rights to their own data, including their voices and faces, simply to participate in the industry at all.

How is it that these proposals leave so much to be desired as far as creatives are concerned? Avila goes on to explain that a lot of these copyright lawsuits, licensing solutions and digital replica rights function as ‘Trojan horses’ for Big Content. He gives the example of the Copyright Alliance, a prominent non-profit that promotes the ‘interests of the “copyright community”’, lobbying for strong protections in response to the development of AI. Although it presents itself as a champion of individual creatives, its leadership is actually dominated by executives from major media conglomerates such as Paramount, NBC Universal, Disney and Warner Bros.

Why, then, the highly visible push for alliances with creatives when these entertainment companies could easily secure lucrative deals with AI firms behind closed doors? Quite simply, it’s because large media corporations are dependent on artists. Their business models rely on creative labour – the time, knowledge and expertise of artists – to generate profit; their lobbying efforts require the backing of sympathetic artists such as Elton John and Florence Welch to have any credibility; and their new partners in the AI industry of course depend on access to artists’ work itself to develop their models.

Viewed from this perspective, relying on many of our most familiar assumptions about AI and its relation to creatives and copyright law begins to appear rather misguided, to say the least. Following Phillips and Shklovsky, we might argue that, rather than robotically taking them for granted and repeating them, it’s these assumptions themselves that we need to pay attention to, resist and perhaps even sabotage.

Something similar can be said about the attempt to understand AI by algorithmically parroting the political philosophies of the past. As we know from thinkers as different as Ernesto Laclau and Chantal Mouffe, Alberto Moreiras and Wendy Brown, the political is not a programme – be it liberal, conservative, feminist, socialist, Marxist, post-Marxist, anarchist, environmentalist, libertarian – that can be rolled out like an operating manual and applied to every situation and circumstance. In Mouffe’s words, the political is a decision that is always ‘taken in an undecidable terrain’. It is ‘this moment of “decision” that characterizes the field of politics.’ For Mouffe (in a line of thought that stretches at least as far back as Philippe Lacoue-Labarthe, Jean-Luc Nancy and Jean-François Lyotard), this is also what distinguishes ‘“the political” – referring to the dimension of antagonism, inherent to human societies – and “politics” – or the assemblage of practices and institutions that attempt to establish an order, to organise human coexistence in the context of the conflicts generated by “the political”.’

(As we have made clear elsewhere, the political is a decision ‘taken in an undecidable terrain’, for Mouffe, ‘because social relations are not fixed or natural’; nor are they the outcome of ‘objective and immutable economic or historical processes and practices’. Rather, they are continually produced through ‘precarious, hegemonic, politico-economic articulations’: in other words, through ‘contingent, pragmatic yet temporary decisions involving power, conflict and violence’. Similarly, in Against Abstraction: Notes from an Ex-Latin Americanist, Moreiras defines infrapolitics as the ‘attempt to think or rethink politics from the region of the ontico-ontological difference’. Infrapolitics should therefore be conceived as ‘the only properly political interrogation of politics (the rest is a program).’ Meanwhile, as we have also shown elsewhere, Wendy Brown uses the term anti-political moralism in Politics Out of History to describe a ‘certain “resistance” to thinking and intellectual inquiry’ among many of those on the left ‘who either refuse or are unable to give up their devotion to previously held notions of politics’.)

It’s true some have argued that criticising critiques of AI as robotic and algorithmic has itself become a robotic and algorithmic thing to do. Yet this line of reasoning is almost invariably deployed to justify sticking with the prevailing deathly (what we’ll see below described as modernist/colonial, liberal and humanist) ways of working and thinking. The result? Nothing changes.

In fact, this may precisely be a strategy for ensuring nothing changes. As Jean-Paul Sartre is quoted as saying: ‘“The rebel… secretly … wants the world and the system to remain as it is. Its permanence, after all, is the guarantee of his [sic] continuing ability to ‘rebel’”’. In other words, many so-called radicals are comfortable with society as it stands, because it allows them to endlessly critique it, and unite in solidarity with others in that critique, without having to alter their own ways of being. This conservatism, when projected into the public realm, also helps explain the caricature of the smug progressive left blamed for the Democrats’ failures in the US – Zohran Mamdani excepted – and Keir Starmer’s enduring lack of popularity in the UK.

What if we approached AI differently, though: as precisely offering us a chance to take decisions in an undecidable terrain about what AI, art, the political, and their relation to the world, actually are? In this sense, AI could be viewed as an invitation to reexamine and potentially rethink these categories rather than simply rehearsing already well-known positions and language. Could we even see AI as providing an opportunity to radically reinvent what it means to be human in more generous and interdependent ways – ways that will actually enable us to feel more positively alive in the crisis-ridden world of the early 21st century? Such crises include not only the planetary crisis, environmental crisis and civilizational crisis of Western liberal democracy, but the related crisis of modernity itself: that is of a particular mode of being-in or being-with the world.

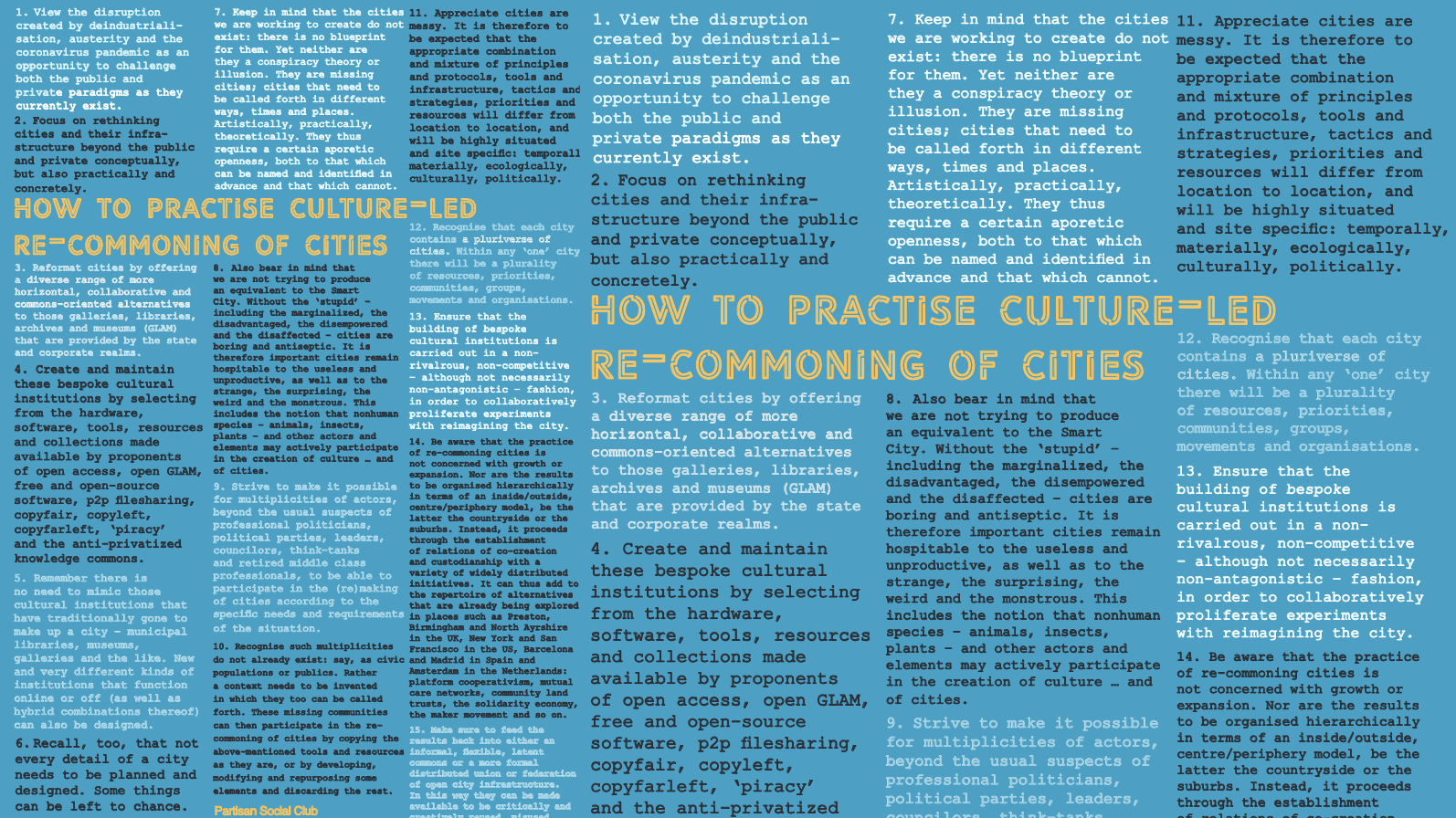

One of the latest norm-critical projects of my collaborators and me explores this possibility through a set of badges responding to the pro-human movement across the cultural industries. Like the Human Authored scheme launched by The Society of Authors, this movement is reacting to the increasing prevalence of AI-generated music, images and text by reaffirming the common-sense belief that genuine art can only be produced by human individuals through flashes of inspiration born of lived personal experience. Badges like ‘100% Human Made’ are used to certify and label such ‘organic’, AI-free works as having been ‘created with human intelligence’.

The 100% Inhuman Made badges project takes a different approach. Developed by an assemblage that includes the design-art collective COPODE, it challenges the assumption that any creative or intellectual work can ever be purely human, on the basis that many distributed, collaborative and infrastructural elements are also necessarily involved, human and nonhuman, including what are often hidden forms of labour. Rather than ‘100% Human Made’, its badges – which can be found at the 100% Inhuman Badge Generator here – therefore carry messages such as ‘100% Inhuman Made: Some AI Used’.

The project works differently in practice, too. Like many of its pro-human counterparts, 100% Inhuman Made invites you to make your own versions of its badges – with a twist. When viewed online at the original URL, every version of the badge is different. This is because, each time the 100% Inhuman Badge Generator is clicked, a new design is produced, with the old badge disappearing unless actively saved. So no badge is the first, last or original. Each is irreducibly singular, appearing and disappearing without archive or guarantee. This means there is no master copy, no final form. There is only a continuous unfolding of variations that resist fixation into authorship or ownership (no matter that these concepts are deeply embedded in our systems of publishing and copyright, which depend on identifiable, stable objects).

(Want to give it a try? Go to the 100% Inhuman Badge Generator and just click on the badge to produce a design. Be warned: the previous version will vanish forever! Press ‘s’ on your keyboard if you want to save the design as a jpg.)
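For readers who think in code, the behaviour just described can be sketched in a few lines of Python. This is only an illustration of the click-to-regenerate, save-or-lose logic, written under our own assumptions: it is not the project’s actual software, and the names `Badge`, `BadgeGenerator`, `click` and `save`, along with the hue and rotation parameters, are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Badge:
    """One badge variation (hypothetical fields, for illustration only)."""
    message: str
    hue: int       # assumed colour parameter, 0-359 degrees
    rotation: int  # assumed tilt in degrees, -15 to 15


class BadgeGenerator:
    """Holds exactly one badge at a time: no archive, no master copy."""

    MESSAGE = "100% Inhuman Made: Some AI Used"  # wording taken from the essay

    def __init__(self, seed=None):
        self._rng = random.Random(seed)
        self.saved = []              # only what the user chose to keep
        self.current = self._draw()

    def _draw(self):
        # Every draw is a fresh random variation of the same message.
        return Badge(self.MESSAGE,
                     self._rng.randrange(360),
                     self._rng.randint(-15, 15))

    def click(self):
        """Produce a new design; the previous one is dropped unless it was saved."""
        self.current = self._draw()
        return self.current

    def save(self):
        """Stand-in for pressing 's': keep a copy of the design currently shown."""
        self.saved.append(self.current)
        return self.current


generator = BadgeGenerator()
generator.click()   # a new variation replaces the last
generator.save()    # this one is kept
generator.click()   # unsaved in-between designs are gone
print(len(generator.saved), "badge saved; now showing", generator.current)
```

The only state the sketch keeps is the badge currently on screen plus whatever has been deliberately saved, which is one simple way of modelling a generator with no first, last or original version.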

In this sense, the 100% Inhuman Made project is not simply a critique but is itself a creative practice. It offers an experimental intervention into what are too often taken-for-granted ideas of creativity, encouraging a shift away from outdated, anthropocentric myths of individual authorship, ownership and originality – myths that, however well-intentioned and artist- and writer-supporting they may appear, continue to prop up extractive, human-exceptionalist worldviews, with harmful social and environmental consequences.

In addition, the ‘100% Inhuman Made’ badges are released on a responsibly open, CC2r: Collective Commitment to Reuse basis. CC2r is not a traditional transactional contract or legal licence to be attached to a work upon completion. Instead, it recognises that texts and artworks always emerge from complex, ongoing, collective processes – again both human and nonhuman – and invites others to participate in sustaining those processes. As its name suggests, CC2r is therefore a commitment. It is a commitment to: ‘(i) building and supporting a practice of interdependency and solidarity by cultivating a collective ground from which to commit and act; (ii) sharing and reusing generously and continuously; (iii) refraining from sharing and reusing when it would mean harmful extraction’.

So feel free to take the badges, copy them, make them available, share them, tell others about them, refer to them, create communities around them. You can print them off, turn them into stickers, posters, bookmarks, labels, stamps, certificates, logos – whatever forms they want to pass through. Or you can use the underlying software code, likewise released on a responsibly open CC2r: Collective Commitment to Reuse basis that ‘supports generosity toward the work done before and after us’ (and is involved in ‘collectively figuring out what is meant by “your” and by “owning”’), to create your own badges and designs. The code can be found here. Reuse it, modify it, fork it.

You don’t need to ask. There’s no campaign to sign up to. Nowhere to register. No organisation – trade or otherwise – to follow. The project is designed to spread through practice and collective reuse rather than formal membership.

Statements provided along with the badges (also on a CC2r basis) underscore the project’s claim: that the work does not originate in the isolated genius of an ‘authentic’ human individual, but emerges from a complex tapestry of human and nonhuman actors, elements, histories, systems and settings. You can choose from a straight, activist, playful, or ironic version – or you can write your own.

This work is not “100% human made” – it is 100% inhuman made.

By using this badge, I affirm it was created not through a spark of individual human inspiration born of authentic lived experience – a claim to originality that, from a decolonial trans*feminist perspective, has been likened to a form of settler colonial violence – but through messy, entangled, collective means. Like all creative and intellectual work, it has emerged from a deeply rooted assemblage of human and nonhuman entities, forces and materialities: bodies and minds, texts and tools, machines and technologies, institutions and infrastructures, software code and server farms, ecological systems and digital networks – and, yes, on occasion, AI.

Straight version

A 100% Inhuman Made badge is an invitation to all of us to reconsider what we mean by ‘the human’ and its relation to the world – particularly in the light of the climate crisis and rise of AI. What’s more, it encourages us to do so in several senses of the term: the human as a member of the human species; as someone capable of ‘humaneness’, ‘humanity’ and human feeling for others; and as a subject who holds human values, is governed by human laws and norms, and upon whom human rights regarding life, liberty, property and citizenship can be conferred.

As such, feel free to display one of these badges on your books, articles, artworks, podcasts, newsletters or any other platforms to signal that you are opposed to human-centred – and ultimately ecologically damaging – notions of authorship and creativity, no matter how well-intentioned and artist-supporting they may appear. Add one to your bio, your book cover, your artwork, your email signature, your website footer: anywhere you’d normally pretend you made it all by yourself.

Activist

The 100% Inhuman Made badges call on us to reconsider what we mean by ‘the human’ and how it relates to the world – especially in the context of the climate crisis and rapid expansion of AI. They urge us to question the human in multiple senses: the human as a member of the human species; as someone capable of ‘humaneness’, ‘humanity’ and human feeling for others; and as a subject that upholds human values, is governed by human laws and norms, and upon which human rights regarding life, liberty, property and citizenship can be conferred.

Use these badges on your books, artworks, newsletters, podcasts or websites to take a stand against outdated, anthropocentric myths of authorship and originality. However well-intentioned, such ideas continue to prop up extractive, human-exceptionalist worldviews that fuel social and environmental harm. Signal your ongoing commitment to messy, multiple, inhuman creativity – and to worldmaking beyond capitalist and colonial domination and expropriation. Put them on your About page, your credits, your masthead: anywhere you’d normally pretend you made it all by yourself.

Ironic

Feel free to stick these badges on your book, podcast or lovingly handcrafted digital zine to reassure the world you’re not clinging to the destructive myth of the lone individual human genius. Because nothing says 21st-century creativity like denying the human and nonhuman collectivity humming beneath your cursor. Use them in your bio, your masthead, your About page: anywhere you’d normally pretend you made it all by yourself.

Playful

Stick these badges on your artwork, book, newsletter, podcast, wherever you make things, as a friendly nudge that you’re not buying into those old-school, human-only, planet-wrecking ideas of authorship and creativity. Sure, they sound noble, but we know better than that by now. Bio, sidebar, About page – go wild! Use them anywhere you’d normally pretend you made it all by yourself.

The 100% Inhuman Made badges project is of course a creative and critical response to the growing pro-human movement in art and culture and its attempt to defensively reinforce normative assumptions about individual human authorship and ownership at a time when these ideas are being disrupted by the widespread adoption of AI. Numerous initiatives exemplify this trend. In addition to those already mentioned, there is the Human Artistry Campaign, with its conviction that only ‘humans are capable of communicating the endless intricacies, nuances, and complications of the human condition through art’. (As the political scientist Yascha Mounk has noted, however, arguments of this kind are somewhat circular in their logic, resting as they do on the belief that ‘AI systems cannot be intelligent or creative because only texts or works of art produced by humans are instances of intelligence or creativity.’)

Creative Commons (whose licenses are often required by funding agencies for the release of academic research outputs as part of open access mandates) has even launched a Keep the Internet Human 25th Anniversary campaign on the grounds that the ‘internet was made for humans by humans.’

The Hogman(Ai) Murder

There is also the artwork by Ashley Rawson (aka the ‘AI Assassin’), calling for the end of AI art. It consists of a three-dimensional portrait titled The Hogman(Ai) Murder depicting an AI-generated Highland cow skewered by a knife.

A still further example is provided by author Paul Kingsnorth. He is opposing what he calls the ‘Ignorance Machine of Artificial Intelligence’ by initiating the Writers Against AI campaign. The accompanying manifesto of ‘refusal and resistance’ urges those in agreement to make three promises:

I will not use AI in my work as a writer.

I will not support writers who use AI in their work.

I will support writers, illustrators, editors and others in related fields whose work is entirely human-made [as if the latter were even possible].

Kingsnorth’s The Abbey of Misrule Substack also offers downloadable logos for both Writers Against AI and Readers Against AI to display in support of the campaign.

Still, it’s important to stress that, while the 100% Inhuman Made project may be understood as a modest attempt to give pause to this pro-human tendency, it is not concerned with undermining the human or otherwise turning away from it in favour of the nonhuman or a condition of humanlessness. In its own small way, the initiative (which, like Kingsnorth’s, is without funding, a formal plan or any campaign or policy leadership ambitions – so that’s something we can agree on at least) is indeed endeavouring to help us relinquish many of our habitual tactics and techniques for deadening ourselves, and in so doing to safeguard the future of the human and nonhuman – undecidable, unpredictable and uncertain though that future may be. For example, as the climatological and ecological emergency intensifies, the social justice activist Naomi Klein is one of many recent thinkers to have emphasised the need to reimagine our modes of relation. The Euro-Western worldview that treats ‘nature’ as a separate, lesser realm – something for (certain) humans to manage, exploit or even heroically protect – is no longer fit for purpose. ‘[T]here is an intimate relationship between our overinflated selves and under-cared-for planet’, she writes in her bestselling book, Doppelgänger: A Trip into the Mirror World. If ‘the climate crisis can be understood as a surplus of heat-trapping gases in the atmosphere; it can also be understood as a surplus of self’. What’s needed is a very different ethos.

That said, we need to take care when it comes to reimagining our modes of relation – especially when it comes to artificial intelligence. As Klein’s critique of the ‘architects and boosters of generative AI’ demonstrates in an article published the same year as Doppelgänger (2023) – ‘AI Machines Aren’t Hallucinating. But Their Makers Are’ – it’s all too easy to repeat the familiar Euro-Western, modernist, liberal and humanist categories that are a major part of the problem.

In many respects it’s hard to disagree with Klein’s clinical dismantling of the ‘utopian hallucinations’ of Silicon Valley CEOs and their fans here: that generative AI will end poverty and loneliness, eradicate disease, solve the climate and extinction crises, provide wise governance and policy making, and free us from boring, unfulfilling work. Yet, like a lot of accounts of the dystopian, even nihilist future of AI, Klein’s analysis still takes a masked, modernist-left liberal humanism as the position from which everything else is to be analysed and judged. This extends to her conceptions of copyright, creativity and privacy rights (which are really quite limited and conventional).

Whereas, in its unsettling of the belief that art and culture must stem solely from the creativity of human individuals – and in opening us up to an expanded notion of intelligence that is not delimited by anthropocentrism – might AI not represent an opportunity for ‘we leftists’, as Klein puts it, even more radical and transformatory than those she points to but quickly discounts? And might this be the case for all that Klein dismisses AI’s most exciting promises for good reason: because in order for AI to truly ‘benefit humanity, other species and our shared home’, it ‘would need to be deployed inside a vastly different economic and social order than our own’, one whose purpose was to meet ‘human needs’ and protect ‘the planetary systems that support all life’?

This is where the example of the climate crisis is so instructive. Our prevailing romantic and extractive attitude toward the environment presents it – much as many position the work of artists and writers in the face of AI – as either passive background to be protected (e.g., from pollution, habitat destruction, loss of biodiversity) or freely accessible Lockean resource available to be exploited for wealth and profit. It is a stance that is underpinned by a modernist/colonial ontology based on the separation of nature from culture, living from non-living, human from nonhuman. Yet isn’t it this very ontology of separation, and the associated liberal, humanist values, that artificial intelligence might, just might, push us to resist and eventually move beyond?

The inhuman of the 100% Inhuman Made badges project, by contrast, does not signify so much a negation of the human – a pejorative sense often tied to exclusionary, racialised and ableist hierarchies – as an undermining of any naïve ontological division between human and nonhuman (and indeed subhuman, unhuman or inhumane). The inhuman, for us, is not about denying support to artists and authors: it is about unlearning the possessive, liberal human individual and generating other, non-modernist, non-liberal and nonhumanist possibilities for subjectivity, creativity and intelligence – and for radically redistributing resources and opportunities accordingly. The in of inhuman is thus what both separates and joins the human and nonhuman. It may signal challenge and opposition: as in the inhuman versus the human and humanism. But it also signals closeness and intimacy: as in within, interior. Accordingly, ‘there is no such thing as the nonhuman … or the human for that matter. Not in any simple sense. Each is born out of its relation to the other. The nonhuman is therefore already in the human – in(the)human.’

To be clear, the 100% Inhuman Made badges project is not a celebration of technological supremacy; nor does it constitute an abandonment of situated thought and critique – even if it is concerned not to let normative conceptions of the human determine the scope and horizon of that thought and critique. It is better appreciated as a shift in orientation: from modernity’s acting on or even on behalf of the world to non-modernist, non-capitalist, non-liberal humanist modes of acting in, with and (as part) of the world.

It is also about moving beyond the idea of knowledge as mastery, as something applied to a passive world. Instead, as we transition from knowing-about to knowing-with, we learn to collaborate as part of complex, multifaceted systems and technologies, communities and ecologies. We become more responsive and experimental, open to being vitally surprised by our undecidable and potentially radically different future, rather than treating it as an algorithmic repetition of the past.

With regard to artificial intelligence specifically, we do not claim AI is neutral: far from it. We recognise that AI is deeply bound up with the extractive, exploitative and dehumanizing logics of capitalism and colonialism. Such deadening logics are perhaps most apparent in its current deployment by the US and Israel against Iran, in what’s been dubbed the first ‘AI war’. However, they can be detected even in many of its public-infrastructure, small language model, open-LLM and open-source forms. (We’re post-open source now, right?)

Yet neither do we believe that a hierarchical dichotomy with the nonhuman that is AI – whether it adopts the form of political moralism or human exceptionalism – is a viable position from which to think and make otherwise. Consider the now widespread critique of artificial intelligence’s own environmental impact, particularly the strain placed on resources by Big AI’s massive data centres. While many writers have drawn attention to this issue, very few of those who are against the use of AI have acknowledged another, equally pressing concern: the ecological toll of those traditional human-centered creative industries with which many of these self-same critics are closely involved, such as print-on-paper publishing. Yet as has been emphasised elsewhere:

In the USA, Japan, and Europe an average person uses between 200 and 250 kilos of paper every year… Producing 1 kilo of paper requires 2-3 times its weight in trees. If everyone used 200 kilos of paper per year there would be no trees left. It takes between 2 and 13 liters of water to produce a single A4-sheet of paper, depending on the mill. The pulp and paper industry is the single largest industrial consumer of water in Western countries.

Against this backdrop, a 100% Inhuman Made badge is less a label than an invitation: to reconsider what we mean by creativity, authorship and the human itself in a moment shaped by both AI and intensifying ecological and climatological crisis.

The 100% Inhuman Made badges project can thus itself be considered a living performance of inhuman creativity – one that seeks to interrupt the familiar (deadly) impulse to take refuge from AI in intuitive human craft and skill.

It is a performance that foregrounds radical ontological relationality over idealised romantic originality; heterogeneous human+nonhuman co-creation over individualistic human genius.

Made-with the world.

Made-with technology.

Made-with AI.

If this invitation resonates, the next step is straightforward: generate a badge, attach it, use it, adapt it, circulate it, incorporate its message into your ongoing creative processes. No permission required.

---

COPODE is a Collective for Potential Design. COPODE does form engineering through experiments in the production of meaning. COPODE is Nigel Power & Mat Ranson. For more, see COPODE’s limited edition Metalabel book, Chance Would Be A Fine Thing.